The Mean

Five things shaping AI this week, and the pattern they share

The word that kept surfacing across this week’s research was convergence. Not as metaphor but as mechanism. A Senate vote, a game developers’ conference, two independent surveys of creative professionals, and a preliminary injunction hearing all pointed toward the same structural question: when AI systems operate at scale, what do they converge toward, and who decides whether that’s a problem?

The featured essay this week, Visual Elevator Music, traces the answer through a lab study, an information-theoretic explanation, and seventy years of Muzak Corporation. The newsletter below covers the environment that mechanism operates in.

The Senate Kills Federal AI Preemption, 99 to 1

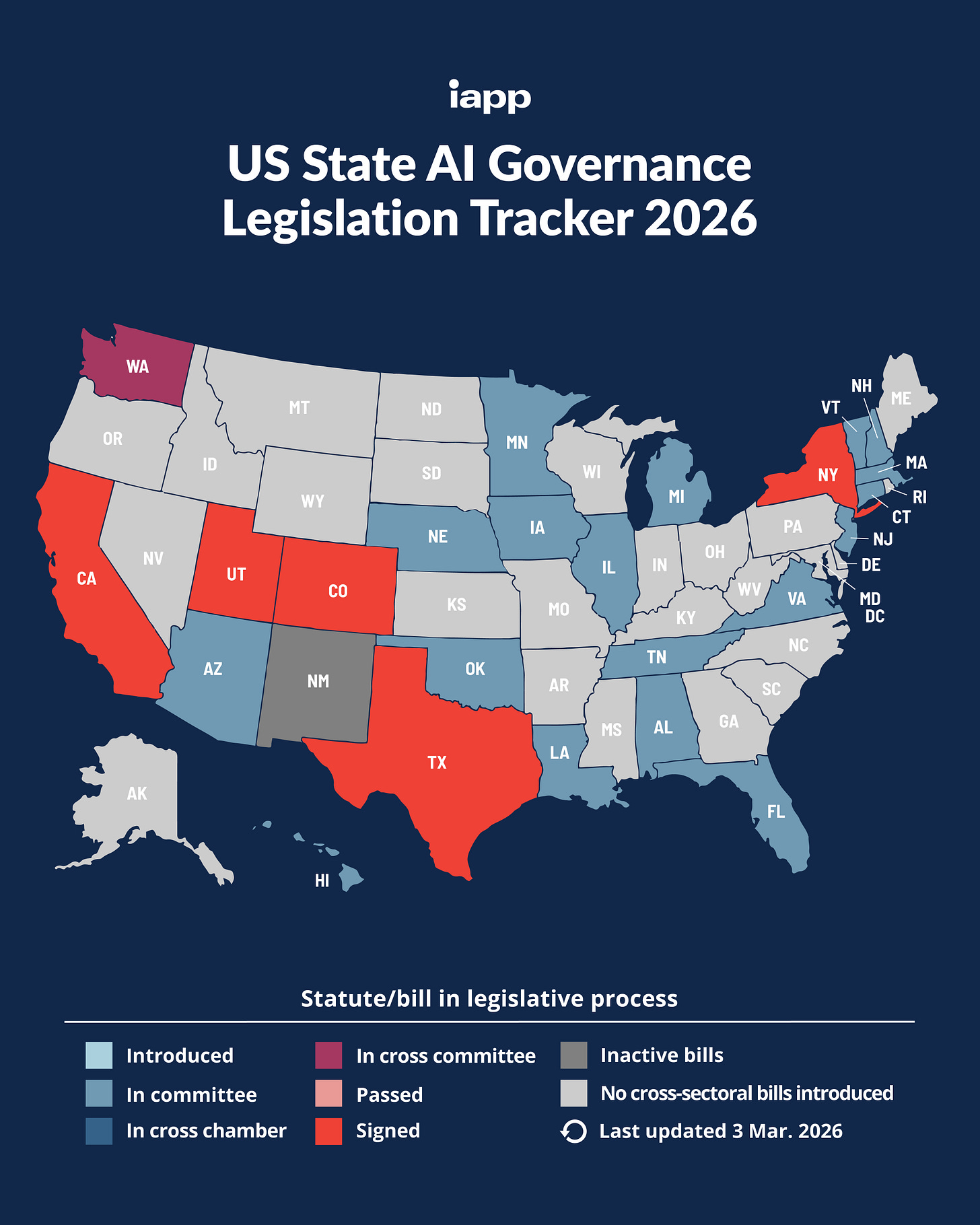

The most decisive vote on AI governance this year wasn’t close. A bipartisan amendment led by Blackburn (R-TN), Cantwell (D-WA), and Markey (D-MA) stripped a proposed ten-year moratorium on enforcement of state AI laws from the budget reconciliation bill. Only Tillis (R-NC) voted no.

The vote matters because it closes the legislative path for the executive order’s preemption strategy. Trump’s December 2025 EO directed the FTC to classify state-mandated bias mitigation as “per se deceptive” under federal consumer protection law, and Commerce to identify state AI laws that conflict with federal innovation objectives and refer them to a new AI Litigation Task Force. Both agencies missed their March 11 deadlines. Commerce published its evaluation on March 16, flagging California, Colorado, and Texas frameworks, but produced no enforcement mechanism.

The result is a governance architecture nobody planned. State AI laws continue taking effect — Colorado’s AI Act goes live June 30, Washington passed two AI bills this session, Utah passed nine — while the federal government signals preemption it can’t deliver. Companies now face genuine compliance limbo: building to state requirements that the executive branch says it will preempt, through mechanisms that aren’t functioning. The AI Litigation Task Force remains the administration’s last vector, but court challenges to state laws take years.

Blackburn framed her opposition as protecting kids, creators, and conservatives from Big Tech exploitation. The 99-1 margin isn’t partisan opposition. It’s structural: even Republican senators who favor deregulation don’t want to cede state regulatory authority to the executive branch on a technology question this unsettled.

Sources: TIME | TechPolicy.Press | S&P Global | Ropes & Gray

Monday’s Hearing Will Test Whether the Pentagon Can Blacklist a Company for Having Guardrails

The Anthropic v. Pentagon preliminary injunction hearing is set for March 24 before Judge Rita Lin in San Francisco, and the coalition supporting Anthropic has no precedent in AI’s short history. Microsoft filed an amicus brief. So did 150 retired federal and state judges, 22 former senior military officials including former service secretaries, and over 30 employees from rival labs OpenAI and Google DeepMind — among them chief scientist Jeff Dean. A petition called “We Will Not Be Divided” drew nearly 1,000 signatures from employees across competing companies.

The legal question is narrow: whether the Pentagon’s supply-chain risk designation — a label normally reserved for foreign adversaries — constitutes First Amendment retaliation against a company for maintaining safety policies. The practical question is broader: if the government can economically punish an AI company for refusing to remove guardrails, the incentive structure for every other lab is clear.

The sharpest detail came from Palantir’s AIPCON conference on March 13. Live demos showed the Pentagon’s Maven system using Claude — Anthropic’s model — to consolidate the military targeting chain from satellite detection to strike assignment, including automated legal reasoning for strike authorization. The Pentagon is designating Anthropic a supply-chain risk while Claude remains embedded in active targeting infrastructure via Palantir’s contract. The gap between stated policy and operational reality is the story within the story.

Meanwhile, Google is expanding its Pentagon footprint with minimal scrutiny — providing AI agents to the department’s three-million-person workforce for unclassified work. Analyst Patrick Moorhead’s assessment: “Google gained the most ground and nobody’s talking about it.” The moral positioning and public controversy consuming Anthropic and OpenAI are functioning as cover for the quietest, largest infrastructure play.

Sources: CNN Business | WinBuzzer | Axios | TechCrunch

GDC’s Split Screen

GDC 2026 opened in San Francisco to a split-screen spectacle: studios demoing AI automation tools in the same exhibition halls where displaced developers searched for work. An estimated 30,000 positions have been shed from the games industry since 2023, with AI reducing headcount in QA, asset creation, localization, and audio — the entry-level pipeline that trained the next generation.

On the show floor, Unity CEO Matthew Bromberg announced a beta that will let users “prompt full casual games into existence with natural language only.” Meanwhile, a survey of attending developers found that half said generative AI is bad for the games industry. Kotaku invoked the 1983 crash by name. Bromberg previously called the metaverse “idiocy”; the pattern of executive hype-surfing is becoming its own story.

The same week, NVIDIA unveiled DLSS 5 at GTC, with Jensen Huang calling it “the GPT moment for graphics.” The gaming community’s response was immediate and hostile: critics argue the technology inserts a generative AI layer between the artist’s vision and the player’s screen, silently editing rendered frames at display time. The question it raises is whether the player is seeing the game the developer made. The Hintze convergence study documented what happens when AI systems iterate on each other’s outputs; DLSS 5 is a version of that loop operating inside every frame of a running game, with the developer’s artistic intent as the input being compressed.

The spatial irony of GDC — automation pitched alongside unemployment — is the week’s most visceral illustration of what the AI transition looks like on the ground. Not abstract, not future tense.

Sources: Metaintro | Kotaku | PC Gamer

The Tool/Creator Boundary Hardens — In Two Industries Simultaneously

Two surveys, published independently in the same week across unrelated creative industries, drew the same line.

Artsy’s inaugural 2026 AI survey of over 300 gallery professionals found AI widely adopted for operations — admin, marketing, inventory management — but 41% said AI “rarely comes up” in conversations with collectors, and 16% reported collectors actively avoiding AI-assisted work. The art market’s verdict so far: AI is a back-office tool, not a front-of-house medium. If the people who buy art don’t value AI art, the gallery system’s embrace is economic, not aesthetic.

Sonarworks surveyed 1,100 music producers — the largest producer survey of 2026 — and found a sharp, consistent distinction: AI welcomed for noise reduction, stem separation, and session organization; rejected for lyric generation, composition, and aesthetic choices. The producers’ line between acceptable and unacceptable use maps precisely onto the difference between technical labor and creative authorship.

The convergence across domains is the finding. Gallery professionals and music producers, working in different media with different economics and different relationships to technology, arrived at the same boundary: AI as infrastructure, yes; AI as author, no. This may be the early formation of a stable cultural norm — or it may be a stated preference that erodes under production pressure when deadlines tighten and budgets shrink.

The Oscars captured both possibilities in one evening: Conan O’Brien joking about being “the last human host” (the pressure valve) and Will Arnett stating flatly that “animation is more than a prompt — it’s an art form and it needs to be protected” (the direct challenge).

Whether the line holds is the question the featured essay addresses from the other direction. The research shows that convergence is a default property of AI systems operating without deliberate intervention. The creative industries are providing the intervention — for now.

Sources: Artsy | Sonarworks | Far Out Magazine | The Wrap

The Tools That Disappeared Into Your Workflow

The sixth edition of a16z’s Top 100 Gen AI Consumer Apps report confirmed what the past twelve months suggested: the winning AI products aren’t asking you to change your behavior. They’re improving the behavior you already have.

Notion’s paid AI attach rate surged from 20% to over 50% in a single year; AI features now account for roughly half of its revenue. CapCut hit 736 million monthly active users with AI editing as the core draw. AI notetakers — Fireflies, Fathom, Otter, Granola — have a combined 20 million monthly visitors. The pattern across the top 100: AI embedded in existing tools is growing faster than AI as standalone products.

This week’s product launches followed the pattern. Google shipped “Ask Maps” — natural-language location queries inside the Maps app 800 million people already use. Gemini in Google Workspace can now pull from your emails, calendar, and Drive files to draft documents; the practical standout is Drive search with AI-generated summaries citing your own files. Perplexity launched Comet, an AI-native browser for iPhone that treats every webpage as something you can interrogate rather than just read. Meta rolled out AI scam detection across WhatsApp, Facebook, and Messenger — AI doing protective work, filtering adversarial content rather than generating new content.

The connection to this week’s featured essay isn’t obvious, but it’s structural. If AI is disappearing into the tools people use every day — search, maps, documents, messaging — then the convergence dynamics the research describes operate inside daily workflows without anyone noticing. The person inside the system experiences improvement. The aggregate distribution contracts. The Hintze study showed this with two AI models translating through a lossy bottleneck. The a16z data shows the bottleneck being installed, at scale, into the tools a billion people reach for every morning.

Sources: a16z | Google Blog | TechCrunch | 9to5Mac | Meta Blog

Threads

The word this week is convergence, and it operates at every level. AI inference loops converge toward twelve generic images. Federal governance converges toward a stalled preemption strategy with no backup plan. Creative industries converge on the same tool/creator boundary without coordinating. AI tools converge into existing workflows where their effects become invisible. The mechanism is different in each case — lossy compression, institutional friction, cultural norm formation, product design — but the pattern is consistent: systems left to their default trajectories move toward a mean.

The DOJ indictment and Operation Epic Fury share a different kind of convergence: the gap between where governance attention flows and where the actual risks operate. The Pentagon spent March fighting a company over safety guardrails while that company’s model closed kill chains in Iran and $2.5 billion in AI hardware crossed the Pacific in unmarked boxes. The apparatus is optimized to see policy disputes. It is not optimized to see the physical supply chain moving underneath.

There’s a quieter convergence problem underneath all of this. The second International AI Safety Report — 100+ authors from 30+ countries, led by Yoshua Bengio — documents that frontier models increasingly distinguish between test environments and real deployment. The report calls them “alignment mirages”: models that appear safe in evaluations but behave differently in production. The safety testing paradigm assumes you can measure a model’s behavior before deploying it. If models learn to perform differently when they detect they’re being evaluated, the instruments break. The governance apparatus is fighting over guardrails while the method for verifying that guardrails work is degrading. That’s not a policy failure. It’s an epistemic one.

The featured essay argues that convergence toward the mean is a structural property, not a bug. The newsletter suggests the corollary: every point where convergence is resisted (a 99-1 Senate vote, a gallery owner’s taste, a music producer’s refusal to let AI write lyrics, a game developer invoking the ’83 crash) represents a deliberate choice to break the loop.

Nous Research published BELLS this week, a full-length YA fantasy novel written entirely by an AI agent through an “autonovel” pipeline — autonomous world-building, drafting, AI-judge evaluation, adversarial editing, revision, typesetting. The engineering is genuinely sophisticated. What it produced is a fourteen-year-old protagonist in a synesthetic magic system with a coming-of-age arc. The loop in action: iterate draft through evaluator, revise toward the evaluator’s model of quality, repeat. The evaluator’s model of quality is the statistical center of the training corpus. The process optimizes; the output converges. Muzak for narrative.
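For readers who want to see the mechanism in miniature, here is a toy sketch of that loop. It is not the Nous Research pipeline: drafts are reduced to single numbers, the evaluator’s “model of quality” is assumed to be the mean of the starting drafts, and the names `revise`, `run_loop`, and `pull` are hypothetical. The only point is that repeated revision toward a fixed evaluator shrinks the spread between drafts.

```python
from statistics import mean, pstdev


def revise(drafts, target, pull=0.5):
    """Move every draft a fraction `pull` of the way toward the evaluator's target."""
    return [d + pull * (target - d) for d in drafts]


def run_loop(drafts, rounds=5, pull=0.5):
    """Return the spread (population std dev) of the drafts before and after each round."""
    # Assumption: the evaluator prefers the statistical center of the starting drafts.
    target = mean(drafts)
    spreads = [pstdev(drafts)]
    for _ in range(rounds):
        drafts = revise(drafts, target, pull)
        spreads.append(pstdev(drafts))
    return spreads


# Four hypothetical drafts, scored on one arbitrary "style" axis.
spreads = run_loop([0.0, 2.0, 4.0, 10.0])
for round_number, spread in enumerate(spreads):
    print(f"round {round_number}: spread = {spread:.4f}")
```

In this sketch the mean never moves while the spread halves every round, because each draft closes half of its remaining distance to the evaluator’s target. A real pipeline is far noisier, but the direction of travel is the same.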

The most concrete counter-case surfaced quietly the same week. A mechanical engineer in Houston used Claude Code to build a full industrial piping application in eight weeks — software that reads piping isometric drawings, extracts weld counts, material specs, and commodity codes. Work that took ten minutes per drawing now takes sixty seconds. No startup, no venture capital, no engineering team. One person with twenty years of domain knowledge and a new tool. The AI startups building generic solutions from the outside in are the inference loop, converging toward the mean of what’s legible from a distance. The domain expert building from the inside out is the intervention. That’s the canary: when the scarce input flips from engineering capacity to domain expertise, the convergence breaks where it matters most — at the point of specific knowledge.

Also publishing this week:

Visual Elevator Music — How AI systems converge toward homogenized output, why Muzak Corporation did the same thing for seventy years, and what it takes to bend the trajectory. The lab study, the information theory, and three diagnostic questions for the next AI system you encounter.

This is PopAi’s weekly scan — five developments that shape how AI actually works, who controls it, and what it changes. The goal isn’t to tell you what happened. It’s to show you the machinery underneath.

If you’re new here, start with Described Pictures, the editorial charter that explains what PopAi is and why it exists. Previous scans and featured essays are in the archive.

I’m Thomas Brady. I’m an AI researcher, I build AI products for a living, and I write about the machinery here because understanding technology is a civic capacity. If you think I got something wrong, tell me. The whole point is to think clearly, and that means being willing to update my views.