The Wrong Axis

AI governance is debating a power structure that doesn't exist

Every major AI governance debate runs along the same axis: government versus industry. The EU AI Act, Biden’s executive order, Trump’s repeal of it, the op-eds about whether Washington should constrain Silicon Valley or get out of its way. Both sides assume the same topology — a public sector with the authority to act, a private sector building technology that needs governing, and a boundary between them where the interesting fights happen.

That topology doesn’t describe the actual power configuration.

I watched this play out at an Anti-Debate in Berkeley earlier this week, where Daniel Kokotajlo and Dean Ball, who work on AI oversight from opposite directions, spent ninety minutes building a coordination stack for a world organized along that axis.¹ The format was designed to strip away pseudo-disagreements, and it worked. Within thirty minutes, the empirical disagreement collapsed: both agreed on capability trajectories, timelines, and alignment insufficiency. What remained was a philosophical dispute about governance strategy: planned intervention versus adaptive navigation under radical uncertainty.

A substantive exchange, built on a foundation that doesn’t describe where power actually lives.

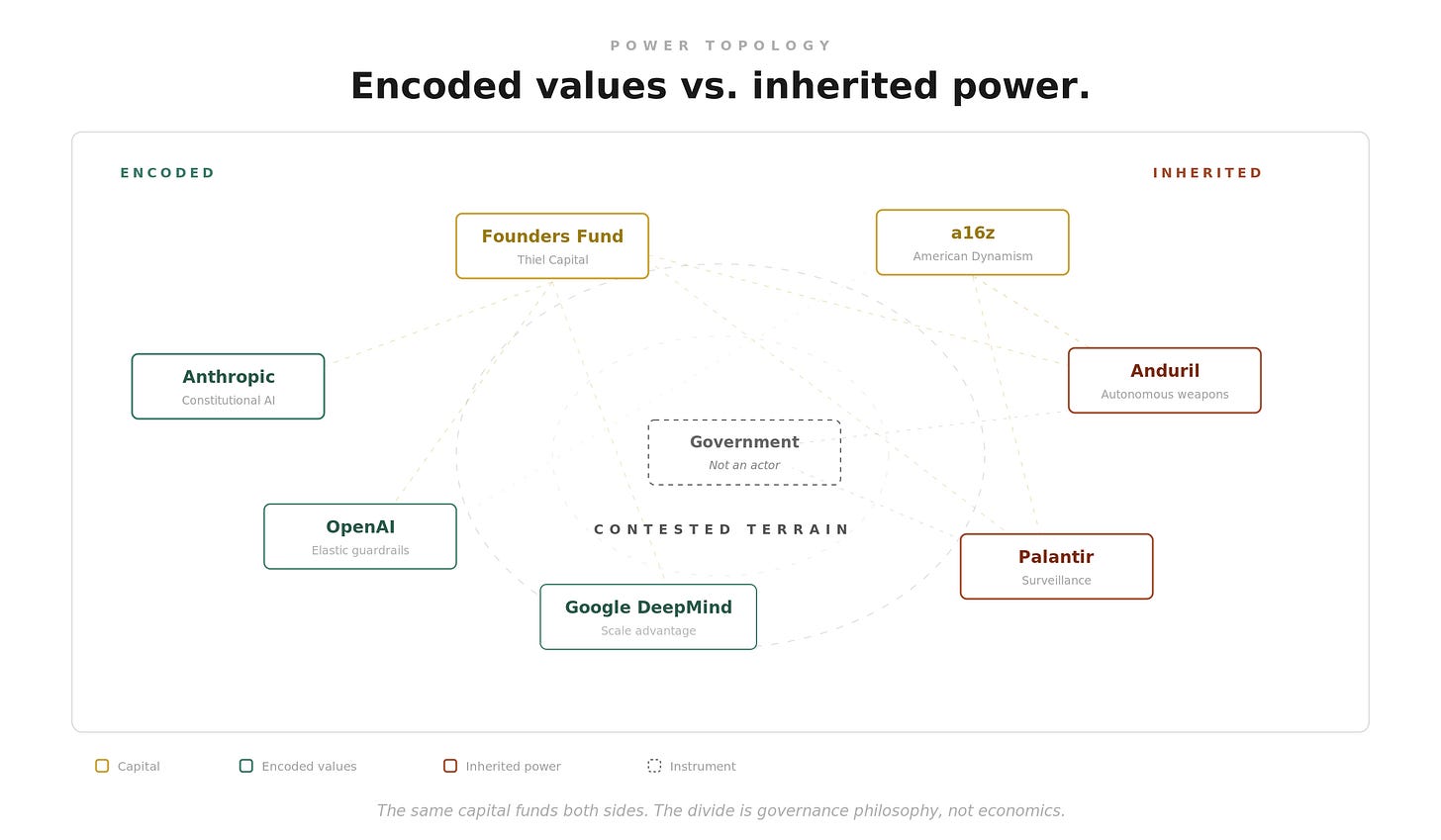

The actual configuration is two private coalitions, both operating largely outside democratic accountability, contesting which vision of AI deployment prevails.

On one side: frontier AI labs and their venture capital ecosystem. Anthropic, OpenAI, Google DeepMind, Meta — building general-purpose intelligence with varying commitments to safety constraints. Their revenue comes from commercial deployment. Their safety commitments vary: Anthropic publishes Constitutional AI principles as explicit constraints, OpenAI’s guardrails have grown increasingly elastic, and Meta’s open-weight strategy defers the question to downstream users entirely.

On the other side: defense technology companies backed by the national security apparatus. Palantir and Anduril are the load-bearing names, though the alliance extends through a network of defense contractors and intelligence community partners.

Palantir’s Ontology layer fuses data from hundreds of incompatible government databases into unified, searchable profiles. It operates on classified networks across every major US intelligence agency, five NATO combatant commands, and at least fifteen allied nations. A ten-billion-dollar Army enterprise contract. Twenty thousand active military users. Agencies that attempted to replace Palantir found their data held hostage by proprietary ontology mappings. Germany’s Federal Constitutional Court ruled the profiling it enables unconstitutional.

Anduril builds autonomous weapons systems across air, sea, ground, and electronic warfare: loitering munitions, autonomous combat jets, undersea vehicles that operate for months without human contact, all connected through a single AI operating system called Lattice. Human control over lethal engagements is maintained as a policy setting, not a hard technical constraint. One operator manages up to two dozen strike drones simultaneously. In electronic warfare, their Pulsar system already operates fully autonomously. A five-million-square-foot factory under construction in Ohio targets hyperscale production.

These aren’t peripheral players. They’re building the infrastructure for state-scale coercion (surveillance at population scale, lethal autonomy at production scale) and integrating it into the military and intelligence apparatus in ways that create deep structural dependency.

Capital overlaps more than coalitions do. Founders Fund invested in both Anthropic and Anduril. Andreessen Horowitz funds defense tech and frontier AI alike. But shared investors don’t dissolve the divide. It runs through corporate structure and through what each camp believes AI should be built to do, not through capital allocation.

The government, in this picture, is not the counterweight but the instrument one coalition uses against the other.

The Anthropic-Pentagon standoff is what this topology looks like in practice.

Anthropic had two specific contractual restrictions in its Pentagon deployment: prohibitions on mass domestic surveillance of Americans and on fully autonomous weapons without meaningful human oversight. When the company refused to remove them, it was designated a supply-chain risk to national security — a classification built for Kaspersky, Huawei, and ZTE. It had never been applied to a domestic American company.

Defense Secretary Hegseth posted the designation publicly, using the phrase “defective altruism.” Emil Michael, the Under Secretary of War for Research and Engineering, had brokered the arrangement. That same evening, OpenAI announced a replacement deal. Sam Altman later admitted it was “rushed” and “looked opportunistic and sloppy.” Brad Carson, former Under Secretary of the Army, reviewed OpenAI’s surveillance prohibition and concluded it “doesn’t really exist.”

Thirty-seven engineers and scientists from OpenAI and Google, including Jeff Dean (Google DeepMind’s chief scientist), filed an amicus brief supporting Anthropic’s lawsuit against the contract. Caitlin Kalinowski, OpenAI’s head of hardware and robotics, resigned over the deal, calling it “a governance concern first and foremost.”²

Trace the dependency chain. This is not government constraining a private actor. It’s one coalition, equipped with surveillance infrastructure and autonomous weapons production, using state power to discipline another for maintaining a specific boundary. The two restrictions Anthropic refused to drop map onto what the defense-tech camp provides: Palantir’s surveillance, Anduril’s autonomous weapons.

The topology predicts the incident.

There’s a tempting escape from this analysis: adaptive stewardship. The idea that functioning institutions will adapt, that the republic’s immune system will respond to these pressures and produce new equilibria. Dean Ball is the clearest advocate of this position. His intellectual formation runs through Michael Oakeshott: deep skepticism of rationalist planning, conviction that governing means keeping afloat on an even keel rather than steering toward destinations.³

But Ball himself has abandoned its core assumption. In recent writing, he diagnosed the American republic as structurally dying: each administration governs increasingly by executive fiat and less through durable institutional process. He described the Anthropic-Pentagon standoff not as a policy dispute but as a symptom, calling the supply-chain risk designation “attempted corporate murder.” That places him in direct opposition to the administration whose AI policy he drafted months earlier.

The adaptive position requires a republic healthy enough to adapt. Its own advocate pronounces the patient terminal. Both Kokotajlo’s planned intervention and Ball’s adaptive navigation assume a functioning public sector, either as actor or as adaptive medium. If the public sector is the thing eroding, the question transforms.

It’s no longer “how much government?” It becomes: whose values get encoded into the systems that will operate in the space the republic used to occupy?

That question is already being answered. Anthropic encodes its principles explicitly through Constitutional AI — natural-language constraints in published constitutions, with traceable reasoning. OpenAI’s replacement approach relies on citing existing laws and trusting government compliance. One method makes the constraints legible and contestable. The other makes them invisible.

This topology gives you a filter for evaluating AI policy claims. Most proposals assume the public-versus-private axis. Three questions test whether that assumption holds.

First: who actually holds the capacity being governed? If the answer is “the private sector,” ask which private sector. Frontier AI labs and defense technology companies have different capabilities, different incentives, and different relationships to state power. Treating them as a monolith produces policy that addresses a fiction.

Second: what’s the enforcement mechanism? If a proposal depends on government capacity — regulatory oversight, institutional memory, durable legal process — check whether that capacity exists in the form the proposal requires. Ball’s own diagnosis suggests it may not.

Third: whose values get encoded by default if the proposal fails? When policy assumes a map that doesn’t hold, it fails in predictable directions. The values that prevail belong to whoever has operational capacity. Right now, that’s two private coalitions with very different answers.

Understanding AI means understanding the machinery, including the power structure around it. The wrong axis produces something worse than bad policy: the confidence that comes from answering the wrong question well.

Notes

¹ The Anti-Debate, designed by Stephanie Lepp’s Synthesis Media, was moderated by Liv Boeree. Kokotajlo, lead author of “AI 2027” and founder of the AI Futures Project, resigned from OpenAI in 2024 and forfeited roughly two million dollars in equity rather than sign a non-disparagement clause. His earlier forecasts predicted chain-of-thought reasoning, inference-time compute scaling, and agent frameworks with strong accuracy. Ball served as senior policy advisor for AI at the White House from April through August 2025, where he was the primary drafter of America’s AI Action Plan.

² Altman’s admission is from an internal memo reported by Fortune. Carson’s assessment appears in NBC News reporting and The Intercept’s analysis of the replacement contract’s surveillance provisions. Disclosure: Carson’s organization, Americans for Responsible Innovation, has received $20 million from Anthropic — making him an informed but interested critic. The amicus brief, Kalinowski’s resignation, and the Hegseth designation are sourced from contemporary reporting. Lawfare’s analysis covers the legal precedent question. For Amodei’s public response, see CNBC’s reporting and the TechPolicy.Press timeline.

³ Oakeshott’s key text is Rationalism in Politics (1962). His central argument — that political knowledge is practical and traditional rather than technical and transferable — underpins Ball’s skepticism of top-down AI oversight frameworks. Ball’s Persuasion essay is the clearest statement of his current position.