The Ratchet

AI structurally favors authoritarian applications. The question is what we do with the window that's closing.

Part 3 of The Wrong Axis series

In Parts 1 and 2, I mapped a topology and a network: two private coalitions contesting whose values govern AI deployment, and a venture capital pipeline that placed its members inside the agencies controlling procurement, hiring, and regulatory policy. This essay addresses the argument beneath the proxy war — the one that survives regardless of which coalition prevails or which administration holds power.

It is a harder argument than the proxy war itself, and one that neither coalition’s partisans want to confront. AI structurally favors authoritarian applications, not because of who builds it, but because of what it is.

The legal infrastructure for mass surveillance already exists. Under the third-party doctrine, Americans have little Fourth Amendment protection over data voluntarily shared with banks, phone carriers, ISPs, or email providers; the main judicial carve-out, Carpenter v. United States (2018), covers historical cell-site location records. Outside such exceptions, the government can obtain this data in bulk without a warrant. The binding constraint has never been legal. It has been human: no agency has the manpower to monitor every camera feed, cross-reference every transaction, read every message. AI removes that constraint.

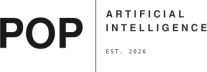

Dwarkesh Patel estimates the cost to process every CCTV camera in America — a hundred million of them — at roughly thirty billion dollars today, dropping tenfold annually.¹ By the end of this decade, comprehensive real-time surveillance of the entire country will cost less than a building renovation. The capability gap between what surveillance law permits and what surveillance practice achieves has been enormous for decades. AI closes it — not as a warning about some future administration, but as a cost curve already falling.
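The arithmetic behind that cost curve is simple enough to check by hand. The sketch below takes the two figures quoted above (roughly thirty billion dollars today, a hundred million cameras) and applies a constant tenfold annual drop. The function and constant names are illustrative, and the assumption that the tenfold rate holds steady every year is a simplification, not a forecast.

```python
# Illustrative projection of the cost curve described above.
# Inputs come from the estimate quoted in the text; the constant
# tenfold annual decline is an assumption carried forward unchanged.

INITIAL_COST_USD = 30e9      # roughly thirty billion dollars today
CAMERAS = 100_000_000        # a hundred million CCTV cameras
ANNUAL_DROP_FACTOR = 10      # cost falls tenfold each year


def projected_cost(initial_cost: float, drop_factor: float, years: int) -> float:
    """Total cost after `years` of dividing by `drop_factor` once per year."""
    return initial_cost / (drop_factor ** years)


def cost_table(max_years: int = 4) -> list[tuple[int, float, float]]:
    """Rows of (years from now, total cost in USD, cost per camera in USD)."""
    rows = []
    for year in range(max_years + 1):
        total = projected_cost(INITIAL_COST_USD, ANNUAL_DROP_FACTOR, year)
        rows.append((year, total, total / CAMERAS))
    return rows


if __name__ == "__main__":
    for year, total, per_camera in cost_table():
        print(f"year +{year}: ${total:,.0f} total, ${per_camera:,.2f} per camera")
```

Under these assumptions, four consecutive tenfold drops take the total from thirty billion dollars to about three million, and the per-camera figure from three hundred dollars to about three cents.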

And the receiving architecture already exists. Palantir’s Ontology layer resolves identities across databases that were never designed to interoperate. A “Person” object pulls its name from HR records, its vehicle from DMV data, its location from cell towers, its finances from banking records. Through FALCON and ICM, a single ICE agent already has access to passport records, Social Security files, IRS data, student visa records including biometrics, license plate detections from a commercial database of over seven billion scans, and telecommunications metadata. Frontier AI does not add a new capability to this infrastructure. It adds speed: the ability to process, correlate, and act on the full volume of data these systems already collect. The NGA director has disclosed that the agency is already circulating intelligence products that are “100 percent machine-generated” — not touched by human hands. This is not a science fiction projection. It is operational practice.

The proxy war between the two coalitions is real, and it matters. But it plays out against a technological substrate that makes authoritarian use the path of least resistance regardless of who prevails.

Max Weber defined the state as the entity with the legitimate monopoly on the use of force. When a state fails, that monopoly does not disappear. It disperses — or transfers.

In September 2022, Ukrainian submarine drones armed with explosives approached the Russian Black Sea Fleet near Sevastopol, guided by Starlink satellite communications. The drones lost connectivity and washed ashore. Elon Musk had geofenced Starlink coverage within a hundred kilometers of the Crimean coast. When Ukrainian officials made an emergency request to activate coverage for the attack, Musk refused, stating that compliance would have made SpaceX “explicitly complicit in a major act of war.” The Senate Armed Services Committee opened a probe. Senator Elizabeth Warren called for an investigation into whether “foreign policy is conducted by the government and not by one billionaire.”

Whatever one thinks of the decision, the fact underneath it is this: a private citizen controlling communications infrastructure that a sovereign military depended on exercised a veto over a wartime naval operation. The monopoly on violence had already found a new host. This was before any of the autonomous weapons systems now under contract were operational.

Look at what is coming online. Anduril’s Fury autonomous combat jet, designated YFQ-44A by the Air Force, went from clean-sheet design to first flight in 556 days. It is one of two winners of an $8.9 billion Air Force collaborative combat aircraft (CCA) program, with production beginning mid-2026 at Arsenal-1 in Ohio. In February, the YFQ-44A completed its first captive carry test with a live AIM-120 missile and flew with both Shield AI’s Hivemind and Anduril’s Lattice autonomy stacks in a single flight; the Air Force now calls it a “fighter drone.” The CCA budget is projected to more than double, from $804 million to $1.7 billion in FY2027. The Pulsar electronic warfare system already operates in fully autonomous engagement mode, ingesting the spectrum, identifying threats, and jamming drone control links without human authorization for each action. Lattice Mesh, Anduril’s coordination layer, received a $100 million contract to scale as DOD-wide infrastructure. One operator can now command swarms of heterogeneous autonomous systems across air, ground, and sea.

This is not prospective. In the Iran campaign, Maven-based AI targeting systems hit a thousand targets in the first twenty-four hours, twice the pace of Iraq’s shock-and-awe, and five thousand in ten days. The Pentagon has since officially confirmed the use of “advanced AI tools” in the campaign, and a Nature investigation independently corroborated the targeting tempo. The military is now working toward a thousand targets per hour, or one every 3.6 seconds: a tempo at which per-target human review becomes a physical impossibility, not a policy choice.

Across every Anduril weapons system, human authorization for lethal engagement is a software setting, not a hardware constraint. The technology is built to operate with minimal human input. The human approval requirement is a policy layer that can be changed by updating rules of engagement, invoking the “urgent military need” waiver in DOD Directive 3000.09, or adjusting Lattice’s configuration. When Anduril co-founder Trae Stephens said there would always be a “responsible party in the loop,” his spokesperson clarified that he “didn’t mean that a human should always make the call, but just that someone is accountable” — accountability after the fact, not authorization before it.

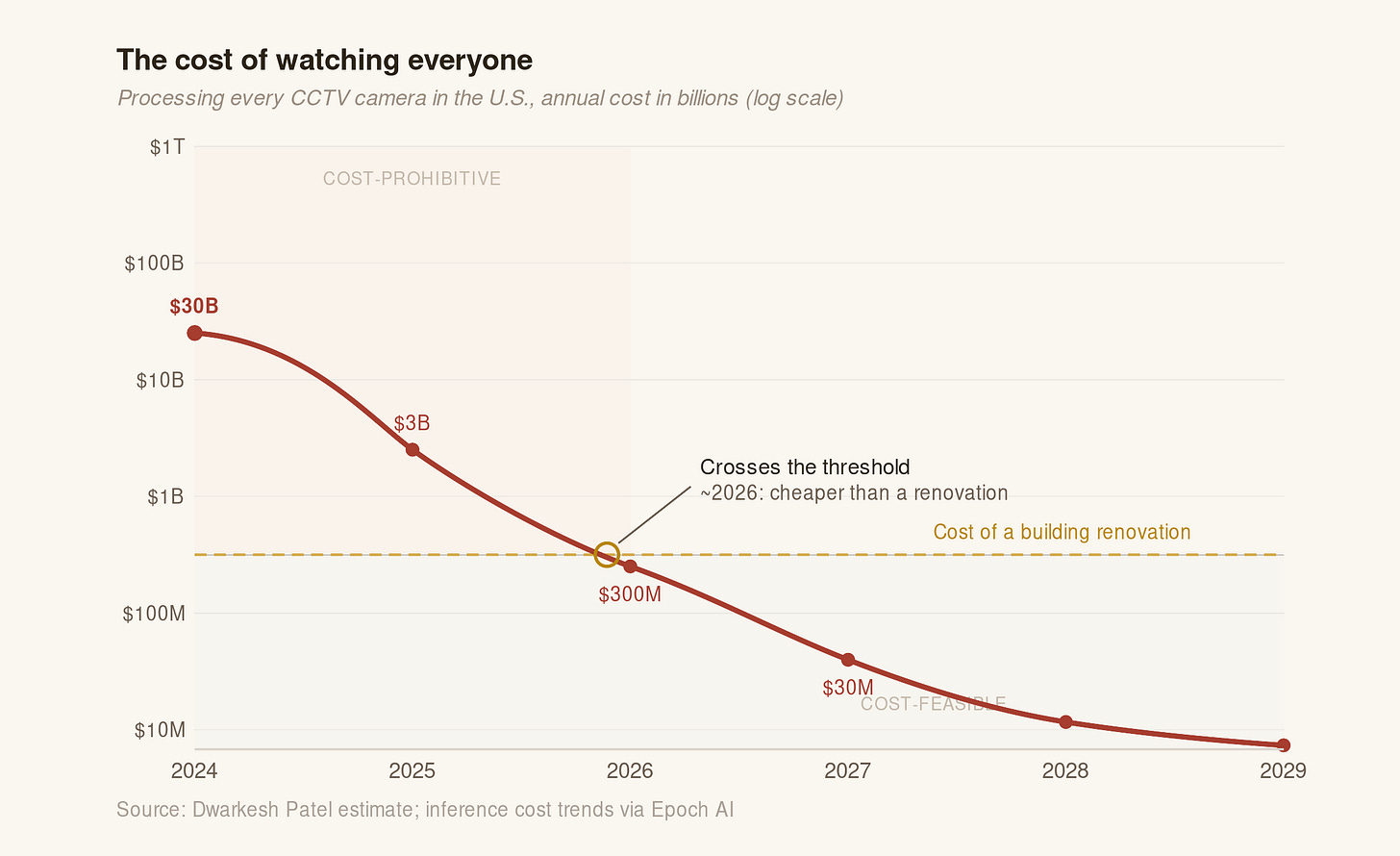

The irreversibility is the point. Every weapons system deployed, every surveillance integration completed, every proprietary ontology mapping that locks an agency into a single vendor creates a one-way dependency. A subsequent administration can replace the appointees. It cannot un-deploy the autonomous fleet, un-fuse the surveillance architecture, or reconstruct the institutional knowledge that was eliminated to make room for these systems. The ratchet turns one way.

Even the strongest actors in the opposing coalition are bending under the pressure.

A TIME profile of Anthropic revealed that the company rewrote its Responsible Scaling Policy in late February, dropping binding commitments to pause development if safety guarantees could not be made. Jared Kaplan, the chief science officer, called the original approach “naive.” Dave Orr, the head of safeguards, offered a metaphor: “We’re driving down a cliff road. A mistake will kill you. Now we’re driving at 75 instead of 25.” Evan Hubinger, Anthropic’s head of alignment stress-testing — who had aggressively defended the RSP’s binding nature through 2023 and 2024 — conceded: “You should downweight the theory of change of RSPs now. The theory of change for RSPs heavily depends on them translating into regulation. That is now extremely unlikely to happen.”

Anthropic held two red lines: autonomous weapons and mass surveillance. It paid for holding them. It is now moving faster on everything else.

OpenAI’s trajectory is more severe. In May 2024, Ilya Sutskever and Jan Leike, who had co-led the Superalignment Team, left the company. Leike stated publicly that “safety culture and processes have taken a backseat to shiny products.” Five months later, Miles Brundage departed the AGI Readiness Team, stating “neither OpenAI nor any other frontier lab is ready.” Daniel Kokotajlo resigned from the governance team and forfeited an estimated $1.7 to $2 million in vested equity by refusing to sign a non-disparagement agreement. Mira Murati, the CTO, departed. John Schulman, co-founder and RLHF pioneer, left for Anthropic.

Then the teams themselves disappeared. The Superalignment Team was dissolved. The AGI Readiness Team was dissolved. On February 11, 2026, the Mission Alignment Team followed — its leader Joshua Achiam reassigned to “Chief Futurist.” Two weeks later, the Pentagon deal was announced. As of late 2025, the Head of Preparedness role was still vacant, three leaders having rotated through in eighteen months.

OpenAI’s own IRS filing tells the story in compressed form. The 2024 Form 990 changed the organization’s mission from building AI that “safely benefits humanity, unconstrained by a need to generate financial return” to ensuring AGI “benefits all of humanity.” Both “safely” and “unconstrained by a need to generate financial return” were removed. When California proposed binding safety legislation that largely mirrored OpenAI’s own voluntary commitments, OpenAI lobbied against it. Former whistleblowers issued a public statement questioning “the strength of those commitments.”²

The competitive pressure is a race to the bottom. One camp is rewarded with contracts for removing safety constraints; the other is punished for maintaining them. OpenAI employee Leo Gao described the Pentagon deal’s safeguards as “not really operative except as window dressing.” When the DOD offered a $100 million prize challenge for translating natural-language commands into autonomous drone swarm operations, Anthropic bid and was not selected; OpenAI-based bids advanced. The attractor bends everyone.

And yet.

On March 9, thirty-seven engineers and scientists from Google and OpenAI, including Jeff Dean, Google DeepMind’s chief scientist, filed an amicus brief arguing that current AI systems “cannot safely or reliably handle fully autonomous lethal targeting” and that the supply-chain designation was “an improper and arbitrary use of power.” Over a thousand employees across Anthropic, OpenAI, and Google DeepMind signed a cross-company petition called “We Will Not Be Divided,” calling on their employers to reject surveillance contracts. Caitlin Kalinowski resigned from OpenAI, stating that the issues at stake “deserved more deliberation than they got.”

Corporate red lines are a holding action — and a porous one. Despite Anthropic’s blacklisting from federal procurement, its LLMs remained embedded in operational military systems through existing integrations that the company could not fully unwind. The strongest corporate refusal in the industry did not actually remove the technology from the pipeline. Within twelve to twenty-four months, open-source models will be capable enough for surveillance applications. The government will not need Anthropic’s cooperation or anyone else’s. The proxy war between the two coalitions matters, but it operates within a closing window.

It matters anyway, for a specific reason: initial conditions create path dependence. The norms established now — which uses are acceptable, which constraints are non-negotiable, what the public expects — shape the political landscape that future actors inherit. Anthropic’s refusal did not prevent mass surveillance. It established that mass surveillance is something a company should refuse. That precedent is fragile, and the network inside the machinery is working to break it. But the precedent exists, and in a world where the technology will soon be available to anyone with a budget, norms may be the only durable constraint available.

This is not a story about good companies and bad companies. It is a story about a system: a failing republic, two private succession claimants, a set of technologies that favor the worst applications of state power by default, and a closing window in which the precedents that might constrain those applications are being set. The monopoly on violence is migrating. The technology it migrates into is not neutral. And the people setting the terms of that migration were never elected.

The proxy war is invisible. The ratchet is not.

¹ Patel’s analysis draws on current GPU inference costs and the existing CCTV infrastructure. The tenfold annual drop tracks with broader trends in inference pricing since 2023.

² The SB 1047 episode is instructive beyond the political fact. The bill’s requirements — pre-deployment safety testing, incident reporting, kill-switch capability — were close to what OpenAI’s own voluntary framework had promised. Opposing the legislative version of your own voluntary commitments is a clean signal about the strength of voluntary commitments.