The Accidental Frontier

The physics driving datacenter AI and consumer hardware toward the same design point — and what it means for who gets access.

Alibaba’s Qwen 3.5 has 397 billion parameters distributed across 512 expert networks.1 On any given token, it activates 17 billion of those parameters and leaves the rest idle — a design called Mixture of Experts, where the model routes each input to a small subset of specialized subnetworks rather than running the full model every time. At a standard compression level, each token requires reading 8.5 gigabytes of model weights from memory. On Apple’s upcoming M5 Ultra desktop, with a projected 1,228 GB/s of memory bandwidth and 256 GB of unified memory,2 the arithmetic works out to roughly 65 tokens per second. Conversational speed. A frontier-class model, on a single machine, with no cloud dependency.

The model was designed in Hangzhou for datacenter training economics. The chip was designed in Cupertino for laptop performance. Nobody in either organization coordinated with the other. The alignment is accidental. And the accident has implications that extend well beyond hardware benchmarks.

The convergence nobody planned

The conventional framing treats on-device AI as a downstream application of datacenter research: labs train large models, then engineers compress them to fit on phones and laptops. The compression is where the compromise lives — smaller, less capable, adequate for autocomplete and notification summaries.

That framing is becoming wrong. I traced the full evidence this week in a structural analysis of the inference stack, from silicon through model architecture: From Transistor to Token. The short version: the physical constraints shaping datacenter economics and the constraints shaping consumer hardware are converging, and architectures designed to navigate one set of limits have emergent properties that fit the other.

The mechanism is specific. The dominant bottleneck in both environments is memory bandwidth per token — how fast you can read model weights from memory during text generation. A datacenter operator serving a thousand concurrent users from a GPU cluster and a laptop user running one model locally face the same fundamental problem: each generated token requires a pass through the active model weights, and the speed of that pass is gated by memory reads, not compute.

Model architects are responding with designs that read fewer bytes per token. Qwen 3.5 replaces three-quarters of its standard attention layers with Gated DeltaNet, a recurrent mechanism that maintains a fixed-size state matrix instead of a key-value cache that grows with every token processed.3 The practical consequence: at 128,000 tokens of context, the DeltaNet layers require 25 megabytes of memory. The standard attention layers require 52 gigabytes. A 2,000× difference. The 3:1 ratio of DeltaNet to attention layers was empirically tuned for quality — it achieves the lowest validation loss of any ratio tested4 — and it simultaneously makes 128K context fit in 128 GB of consumer memory. The architecture was optimized for one thing and achieved another.

Meanwhile, Apple’s M5 silicon moved in a complementary direction. The dedicated Neural Engine — 16 fixed cores, unchanged from the prior generation — got one sentence in the press release.5 The architectural move was embedding neural accelerators directly into GPU cores, so AI compute now scales with the chip: 10 accelerators on the M5, 40 on the M5 Max. Apple dropped its longstanding TOPS metric entirely, replacing it with “4× peak GPU compute for AI.” The unit of measurement changed because the hardware target changed.

Neither organization designed for the other’s constraints. Both designed for bandwidth efficiency because bandwidth is the shared physical bottleneck. Physics-driven trends tend to be durable. This one is accelerating: Kimi Linear, Google’s RecurrentGemma, and IBM’s Granite 4.0 all independently arrived at similar hybrid attention architectures, each tuned for datacenter performance, each carrying the same emergent fit for consumer hardware.6

What the numbers mean in practice

But does “frontier model on a desktop” translate into something that changes how people work? Or does it remain an impressive benchmark for hardware enthusiasts?

Start with what shipping hardware can do today. The M5 Max — available now at $5,000 for the 128 GB configuration — runs a 70-billion-parameter model at roughly 10 tokens per second through one popular runtime.7 Choose MLX, Apple’s open-source research framework, and the same model on the same chip reaches an estimated 22–32 tokens per second, because MLX eliminates data-copying overhead through zero-copy unified memory access.8 Same chip, same model, same compression level, 2–3× variation in measured speed depending on which software layer sits between you and the silicon. Most benchmark discussions collapse this variation into a single number.

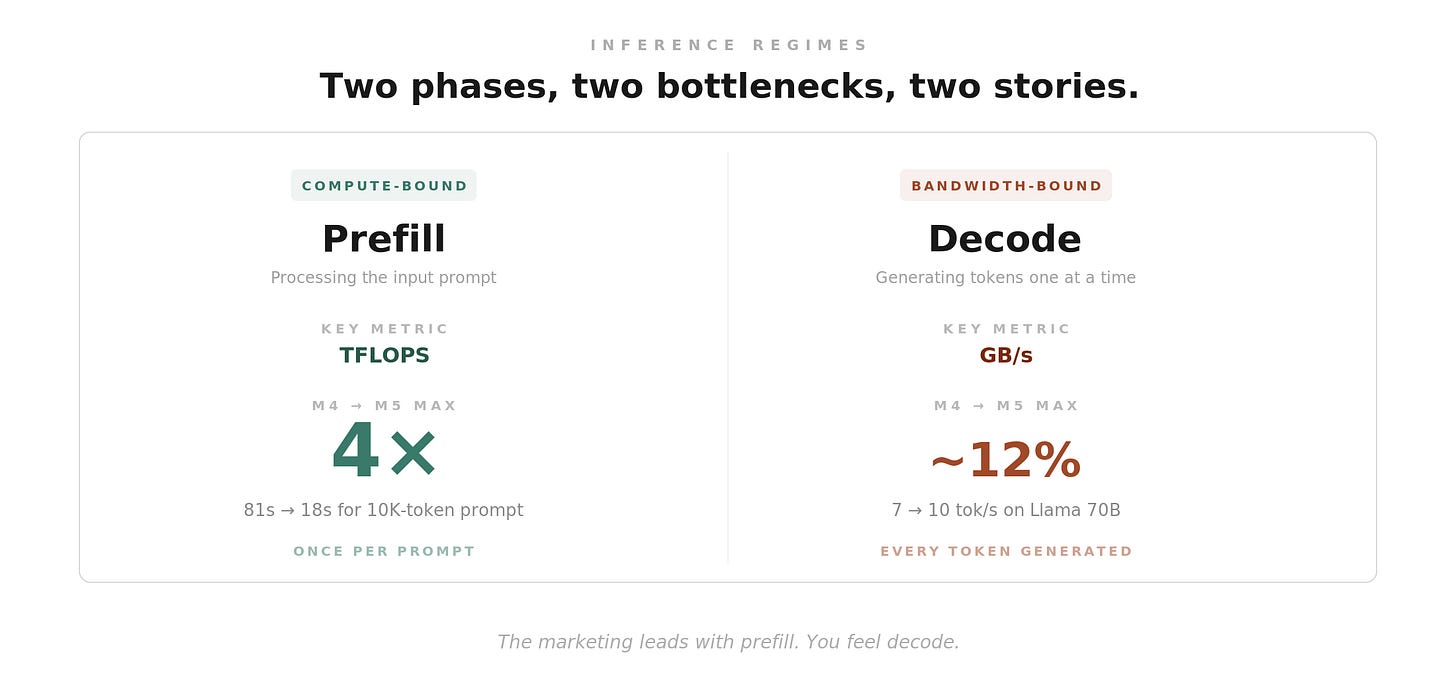

The deeper distinction is between the two phases of text generation. Prefill — processing the input prompt — is compute-bound: more processing power means faster prefill, and the M5 Max delivers a 4× improvement over its predecessor. A 10,000-token prompt that took 81 seconds drops to 18.9 Decode — generating tokens one at a time — is bandwidth-bound, and the improvement is 12%. The marketing headline leads with prefill because the number is bigger. Decode determines the ongoing experience.

This split maps directly onto use cases. For agentic workflows that process large context windows, for retrieval pipelines that ingest long documents, the prefill improvement is transformative: tool-use patterns with rich context become practical on local hardware for the first time. A system prompt plus a full research paper plus a set of tools, processed in under twenty seconds rather than over a minute. For conversational back-and-forth, the decode improvement is real but modest — both the old and new speeds fall below the threshold where output feels fluid.

The most radical near-term application sits further up the stack. Andrej Karpathy’s autoresearch pattern — a small Python program that proposes model modifications, trains for five minutes, evaluates against a held-out metric, and keeps the change only if performance improved10 — runs at 8–9 experiments per hour on Apple Silicon.11 On an H100, the standard cloud GPU for AI research, it runs at 12 experiments per hour. The throughput gap is modest. The cost gap is not: the H100 runs $2–3 per hour of cloud access. The MacBook Pro runs on electricity.

When an MLX port ran the same algorithm on Apple Silicon, it found fundamentally different optimal architectures than the H100 — shallow, wide networks rather than deep ones — because lower throughput per iteration forced each training step to extract more learning.11 The loop did not know this in advance. It discovered it by running on the substrate.

Autonomous experimentation on consumer hardware is not theoretical. It is running. But it has hard limits. The ratchet works where you can define a clean scalar metric — validation loss, benchmark accuracy, kernel throughput. Most intellectual work does not reduce to a single number, and the agents driving these loops exhibit what Karpathy described as “low creativity”: they tweak hyperparameters more than they explore novel structures.12 The capability is real and bounded.

The distribution question

The honest version of the democratization thesis requires asking who benefits today, and what would have to change for the answer to be broader.

Right now, running frontier-class models locally requires knowing what quantization schemes do, choosing between runtime frameworks, managing memory budgets, and troubleshooting when software overhead eats your throughput. The first beneficiaries of 70B-on-a-laptop are researchers, engineers, and developers who already understand the stack. The autoresearch ratchet requires writing a specification file and a training script. The distributed inference frameworks require configuring cluster topologies. Each layer of the stack that is now physically viable on consumer hardware carries its own expertise barrier.

History offers a calibration. The personal computer existed for a decade before graphical interfaces made it usable by non-programmers. The internet existed for twenty years as a research tool before the browser turned it into a consumer platform. In both cases, the hardware arrived years before the application layer that made it broadly accessible. The infrastructure was necessary. It was not sufficient.

Apple Intelligence represents one approach to that application layer — running smaller models through CoreML for system-level features like writing assistance and notification summaries. But Apple Intelligence operates on models an order of magnitude smaller than what the silicon can support, mediated by a framework that imposes 2–4× overhead on the operations most relevant to modern AI workloads.13 The distance between what Apple’s hardware can do and what Apple’s software exposes is the single largest source of wasted capacity in the stack. A rumored unified framework, potentially arriving at WWDC in June, could narrow that gap — but the report comes from a single source with no technical corroboration.14

The application layer that would turn hardware convergence into broad access does not exist yet. It would need to make choosing a model, loading it, and directing it toward a domain problem as invisible as the filesystem is to someone using a word processor. Not an inference playground for enthusiasts — an intelligence layer that a physician, a policy analyst, or a materials scientist can point at their own questions without understanding memory bandwidth or compression tradeoffs.

Pieces are moving in that direction. MLX is maturing. Metal 4’s new APIs give developers direct access to the neural accelerators in GPU cores. Persistent local agents that accumulate knowledge over time — compounding their usefulness rather than resetting with each session — demonstrate what a local AI surface could look like when the application layer catches up to the silicon.15 The building blocks exist. The integration does not.

The bet

The physics-driven convergence is real and accelerating. Model architectures are becoming more bandwidth-efficient because datacenter economics demand it, and as a side effect, they fit on consumer hardware. Apple’s silicon is becoming more inference-capable because the company has decided local AI is a primary workload, and as a side effect, frontier-class models can run on a desktop. Both trends are structural. Neither depends on the other. Neither is likely to reverse.

Within two product cycles, a $10,000 desktop will run models that today require multi-node GPU clusters costing four times as much.16 The M5 Ultra’s projected specifications — 80 neural accelerators, up to 512 GB of unified memory, roughly 1,200 GB/s of bandwidth — would put trillion-parameter models within reach of a single machine.2 The models that will run on that hardware are being designed now, optimized for bandwidth constraints that happen to match.

Whether this becomes a rising tide depends on what gets built above the silicon. The application layer. The quality ceiling of locally-runnable models. The question of whether local inference reaches only the technically fluent or becomes invisible infrastructure available to anyone with a hard problem and the patience to articulate it. The PC needed the GUI. The internet needed the browser. Local AI needs its equivalent, and nobody has shipped it yet.

The hardware convergence is the precondition, not the outcome. The full technical analysis traces the stack layer by layer, from the Neural Engine’s silicon architecture through framework overhead, model design, and autonomous experimentation — making each layer’s real behavior visible. What gets built on top of that stack is the open question, and it is the question that determines whether the accidental frontier stays an enthusiast’s achievement or becomes the infrastructure for a broader shift in who can do serious intellectual work with AI. I’m hoping the latter.

Footnotes

Qwen 3.5 technical report and model card: qwenlm.github.io/blog/qwen3.5. The 397B-A17B flagship activates 10 routed experts plus 1 shared expert out of 512 total per token. The 3:1 DeltaNet-to-attention ratio and the Mixture of Experts routing are both detailed in the technical report’s architecture section. ↩

M5 Ultra specifications are projected from the established 2× Max formula, corroborated by four independent streams: firmware leak exposing Mac Studio model J775d with chip identifier H17D; iOS 26.3 chip identifier T6052/H17D following Apple’s Ultra naming convention; Mark Gurman at Bloomberg narrowing the timeline to “middle of the year”; and Ming-Chi Kuo at TF International Securities forecasting N3P mass production with SoIC packaging. See From Transistor to Token, Act IV, for the full projection methodology. ↩ ↩2

Gated DeltaNet combines a decay gate (global memory clearing during context switches) with the delta rule (targeted surgical updates to specific key-value pairs — the mathematical equivalent of one step of online stochastic gradient descent applied to the model’s state on every token). The mechanism is detailed in the Qwen 3.5 technical report and independently in the Kimi Linear architecture paper. ↩

The 3:1 ratio finding comes from the Kimi Linear ablation study, which systematically tested DeltaNet-to-attention ratios and found 3:1 achieves the lowest validation loss. Qwen 3.5 adopted the same ratio. The convergence is empirical, not theoretically derived — a cautionary note, since MiniMax abandoned hybrid linear attention after quality degradation on complex multi-hop reasoning at scale. ↩

Apple M5 press release, March 2026. The Neural Engine gets one sentence tied to “Apple Intelligence” consumer features. The GPU Neural Accelerators receive the performance claims, the LM Studio name-drop, and the explicit connection between 614 GB/s bandwidth and LLM inference. Ben Weinbach at Creative Strategies provides the most detailed independent teardown of what this shift means architecturally: Creative Strategies analysis. ↩

Kimi Linear (approximately 3:1), RecurrentGemma (approximately 2:1), Granite 4.0 (pushing to 9:1), and RWKV-7 (eliminating full attention entirely) all arrived independently at hybrid or fully recurrent architectures. The common driver is the same: reducing per-token memory reads for inference efficiency. The diversity of ratios reflects the fact that the optimal tradeoff between recurrence and attention is empirical, not settled. ↩

Ziskind benchmark suite, the most rigorous independent measurements of M5 Max performance. Stream Triad testing measured 351 GB/s sustained memory throughput, 13% above M4 Max and exceeding the M3 Ultra desktop chip’s 337 GB/s. Decode-phase token generation benchmarks confirm the bandwidth-bound hierarchy: 65 tok/s on M5 Max versus 82 on M3 Ultra. See Ziskind benchmarks. ↩

MLX is Apple’s open-source ML research framework, achieving 20–30% higher throughput than llama.cpp on Apple Silicon via zero-copy unified memory access and optimized Metal compute shaders. The 22–32 tok/s projection for 70B models is derived from measured MLX advantages over llama.cpp’s GGUF-format benchmarks. See MLX on GitHub. ↩

Prefill benchmarks from MacStories M5 Max review. The 4× claim is independently corroborated by Ziskind: Gemma 34B at Q4 quantization measured 4,468 tok/s on M5 Max versus 1,855 on M4 Max — a laptop outperforming Apple’s own M3 Ultra desktop on compute-bound inference. ↩

Andrej Karpathy, autoresearch on GitHub. 630 lines of mutable Python training code, an immutable evaluation harness, and a Markdown specification file called

program.md. The git-based ratchet: branch from main, modifytrain.py, commit, train for five minutes, evaluate against validation bits-per-byte on a held-out set, merge if improved. The design philosophy — one GPU, one file, one metric — is deliberate: the system cannot hallucinate results because the metric is measured, not generated. ↩trevin-creator, autoresearch MLX port on GitHub. The depth-4 finding was emergent: shallower, wider layers with more optimizer steps outperform deeper networks when per-iteration throughput is lower. The same algorithm running on the ANE via private APIs (ncdrone’s autoresearch-ANE fork) found a third optimum: depth-6 at sequence length 512. Three compute targets on the same chip, three different optima. ↩ ↩2

The “low creativity” characterization comes from Karpathy himself, in GitHub Issue #22 on the autoresearch repository. The agent mostly tweaks hyperparameters rather than exploring novel architectures. Additional failure modes documented in From Transistor to Token, Act VI: a random seed incident where the agent’s “improvement” was evaluation-set overfitting, high crash rates (26 of 35 experiments on one M4 Mini run), and a 10-million-parameter scale ceiling within the five-minute training budget. ↩

CoreML overhead data from the Orion paper (arXiv:2603.06728), which cataloged 20 ANE restrictions, 14 previously undocumented. CoreML’s dispatch floor is roughly 0.095ms per operation; for a 256×256 matrix multiplication taking 0.006ms of actual ANE compute, CoreML’s overhead consumes 94% of wall-clock time. The maderix reverse-engineering project (GitHub) independently measured the ANE at 19 TFLOPS and 6.6 TFLOPS/W — 50–80× more energy-efficient than datacenter GPUs, most of it inaccessible through the public API. ↩

The “CoreAI” framework report comes from Mark Gurman at Bloomberg, March 2026. No framework binary, developer documentation, Xcode headers, or confirming job postings have surfaced. Developer Ronald Mannak’s widely-seen tweet connecting CoreML overhead findings to the rumored update captures community sentiment, but the ANEMLL project’s expert reaction is more cautious, calling for lower-level ANE access rather than another high-level unified API. ↩

Nous Research, Hermes Agent on GitHub. A persistent agent running via llama.cpp on Apple Silicon with a Qwen model, accumulating reusable skill documents over time. The entire stack — silicon, framework, model, agent — runs on one machine with no cloud dependency. The skill-document accumulation pattern mirrors the autoresearch ratchet: both move forward monotonically, both compound structured knowledge rather than resetting. ↩

Jeff Geerling’s December 2025 benchmarks on a cluster of four M3 Ultra Mac Studios with 1.5 TB total memory via EXO Labs’ distributed inference framework and RDMA over Thunderbolt 5: Qwen3 235B at 31.9 tok/s, DeepSeek V3.1 671B at 32.5 tok/s, Kimi K2 (1T parameters) at 28.3 tok/s. That four-node cluster costs roughly $40,000. The projected M5 Ultra single-machine specs would match or exceed these throughput numbers at one-quarter the cost. See Geerling’s benchmarks and EXO Labs on GitHub. ↩