Occupied Territory

How a venture capital network took control of the machinery of the state

Part 2 of the Wrong Axis Series

The standard revolving door carries people through government and back out into industry. Executives serve a term in Washington, build relationships, then return to the private sector with a Rolodex and regulatory insight. The door revolves. The institution survives.

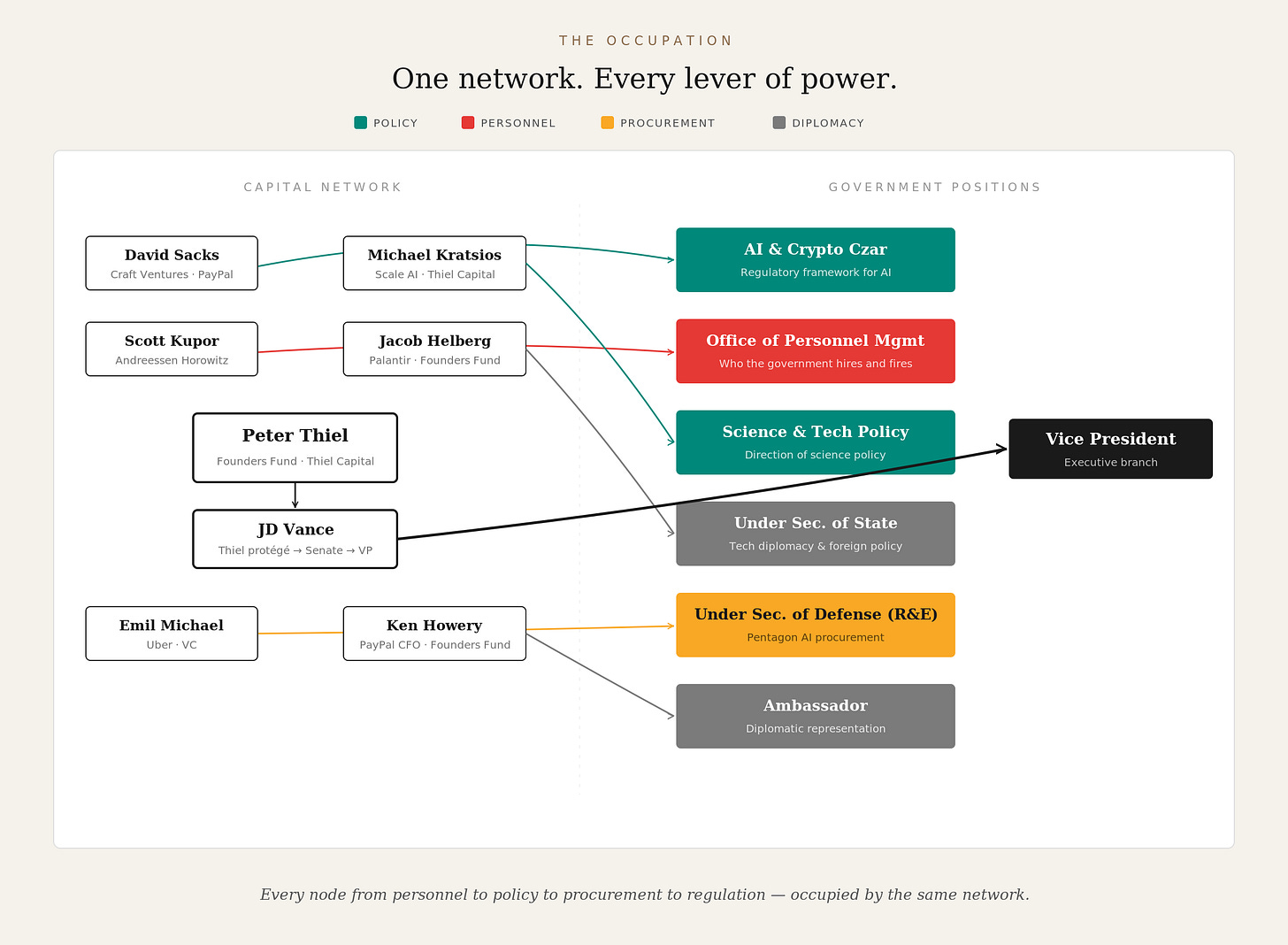

What is happening now runs in the opposite direction, and it is not a door. A political network rooted in the venture capital ecosystem has placed its members inside the federal government — not as advisors or donors, but as operators running the agencies that control AI procurement, federal hiring, science policy, and the regulatory framework for the technologies their own funds invest in.

The architecture traces to Peter Thiel. In 2017, he hired JD Vance at his investment firm. In 2019, he backed Vance's own venture fund. In 2021, he introduced Vance to Trump at Mar-a-Lago. In 2022, he gave a record fifteen million dollars to a super PAC backing Vance's Ohio Senate campaign, reported as the largest sum ever given to support a single Senate candidate. In July 2024, Vance became the Republican vice presidential nominee. Three links in a chain: investor to protégé, protégé to candidate, candidate to the second-highest office in the country.

From there, the placements follow the logic of the two-coalition map. David Sacks, Thiel's former COO at PayPal and managing partner at Craft Ventures, a fund with an active AI portfolio, became the White House AI and Crypto Czar, setting policy for the sector his own investments depend on. Jacob Helberg, a Palantir senior adviser married to former Founders Fund partner Keith Rabois, became Under Secretary of State. Emil Michael became Under Secretary of War for Research and Engineering, the office that brokered the Pentagon AI contracts and blacklisted Anthropic for refusing to remove its surveillance and autonomous weapons restrictions.¹ Scott Kupor, managing partner at Andreessen Horowitz, became director of the Office of Personnel Management, the agency that controls who the federal government hires and fires. Michael Kratsios, who came up through Thiel Capital and most recently served as managing director at Scale AI, heads the Office of Science and Technology Policy. Ken Howery, PayPal's founding CFO and a Founders Fund partner, serves as a U.S. ambassador.

Notice what these positions control. OPM governs the civil service. OSTP shapes science policy. The Under Secretary of War controls defense technology procurement. The AI Czar sets the regulatory framework for the technologies his fund invests in. Every node in the chain from personnel to policy to procurement to regulation is occupied by someone from the same capital network. This is not influence. It is operation.

The capital map beneath these appointments complicates a clean two-coalition story, and the complication is load-bearing.

Founders Fund co-led Anthropic’s February 2026 Series G at $380 billion while simultaneously writing a billion-dollar check into Anduril’s latest round. Andreessen Horowitz holds positions in OpenAI, xAI, Mistral, and Safe Superintelligence while its American Dynamism practice has deployed over a billion dollars into defense companies including Anduril, Shield AI, and Castelion. At least twelve major investors — ICONIQ, Sequoia, Fidelity, BlackRock, General Catalyst among them — hold positions in both OpenAI and Anthropic. The hyperscalers bridge both worlds through equity stakes in frontier labs and infrastructure contracts with defense.

So the money flows everywhere. Why speak of two coalitions at all?

Because capital is fungible and values are not. What distinguishes the coalitions is whose values govern deployment: whether frontier AI systems will carry safety constraints and usage restrictions, or be made available without limitation to the national security apparatus. That distinction plays out not in funding rounds but in governance structures. General Paul Nakasone, former NSA Director, sits on OpenAI's board and its safety committee. Anthropic's Long-Term Benefit Trust, an independent body with no financial stake in the company's commercial success, is structured to elect a majority of the company's board. The Thiel-Sacks-Vance pipeline gives one coalition direct access to the executive branch; Anthropic's public benefit corporation structure gives the other a legal obligation to stakeholders beyond shareholders.

The most revealing gap in the capital map: Andreessen Horowitz, which invests across both coalitions, does not invest in Anthropic, the most safety-committed frontier lab. One a16z general partner has argued publicly that AI safety regulation's "true purpose" is to suppress open-source AI and deter competitive startups. The absence is a thesis statement, not an oversight.

And revenue dependency determines who can afford to say no. Anduril relies almost entirely on DOD; Anthropic's $14 billion in commercial revenue gave it the financial independence to refuse the Pentagon contract and survive.

The financial trail behind the political appointments reinforces the point. Elon Musk spent $288 million on the 2024 election cycle. OpenAI president Greg Brockman and his wife donated $25 million to MAGA Inc. and another $25 million to Leading the Future, a pro-AI super PAC opposing state-level AI regulation — co-funded by Andreessen, Horowitz, and Palantir co-founder Joe Lonsdale. Sam Altman donated $1 million to Trump’s inaugural fund. In 2020, tech and finance billionaires gave $186 million more to Democrats than Republicans. By 2024, that flow had reversed: billionaires gave $509 million more to Republicans, with seventy percent of the hundred largest billionaire-family donations going to the GOP. The shift took roughly eighteen months.

A revolving door implies an institution that survives the rotation. What is happening now is not rotation but replacement, and the sequencing reveals the method.

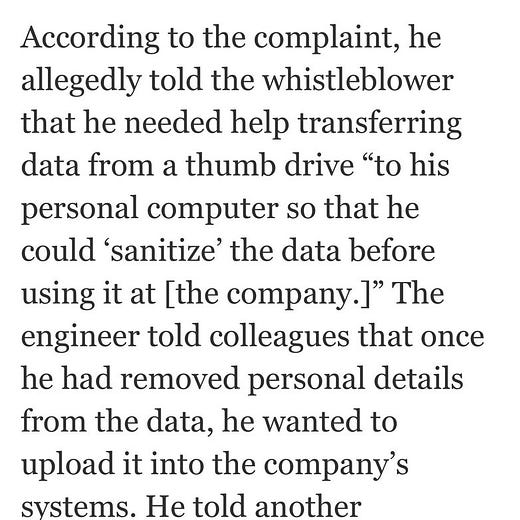

First, the administrative state’s capacity to resist was degraded. Musk’s Department of Government Efficiency executed mass reductions across regulatory agencies. OPM — now run by Kupor — implemented Schedule F, converting approximately fifty thousand career civil servants from merit-protected positions to at-will employees. An AI-driven tool scanned two hundred thousand federal regulations and flagged roughly half for elimination. ProPublica documented twenty-three DOGE operatives making cuts at agencies where they held prior financial interests. The Social Security Administration’s inspector general is now investigating allegations that a former DOGE engineer exfiltrated sensitive data on a thumb drive — and told colleagues he expected a presidential pardon if caught.³

Then the vacated positions and weakened agencies became available to fill and direct.

Regulatory capture, in the traditional sense, means an industry bends an institution to serve its interests while the institution continues to function. What is happening here has a different character. The administrative infrastructure is being dismantled, and the occupying force moves into the cleared space. The antibodies (career civil servants with institutional memory, inspectors general, regulatory enforcement staff) were eliminated before the colonization began.

The intellectual architecture for this program was supplied in advance, and it is worth pausing on because it connects the operational reality to the theory that produced it. Curtis Yarvin, writing as Mencius Moldbug, spent years articulating the case for treating the administrative state not as a tool to be reformed but as an enemy to be destroyed. His proposal was blunt: “Retire All Government Employees.” Thiel invested in Yarvin’s startup and called him “interesting and powerful.” Andreessen invested in his company and publicly promoted his work. Vance cited him directly. At Trump’s 2025 inauguration, Yarvin attended as what Politico described as an “informal guest of honor.” DOGE is Yarvin’s program with a budget and presidential authority. The intellectual blueprint and the operational demolition are the same project, separated only by the time it took to acquire the machinery to execute it.²

The coalition that placed these operatives arrived on the back of a populist electoral mandate. Seventy-seven million Americans voted for Donald Trump, many motivated by immigration enforcement, economic anxiety, and a conviction that institutions had stopped serving them. That last intuition — that the administrative state was broken — was not wrong. The conclusions drawn from it were.

The coal miner in West Virginia who voted to shake up Washington did not vote for Peter Thiel’s portfolio companies to set Pentagon AI procurement policy. The small business owner in Ohio who resented regulatory overreach did not vote for Andreessen Horowitz’s managing partner to control federal hiring. The veteran in Texas who distrusted the defense establishment did not vote for a former Uber executive to broker autonomous weapons contracts. Each figure is real, each grievance legitimate, and each was leveraged toward an outcome none of them would recognize as what they asked for.

The proxy war between the two coalitions is invisible to the electorate that enabled it. The appointments are public but functionally opaque: OPM, OSTP, and the Under Secretary of War for Research and Engineering are not offices that generate headlines. The voters who handed power to this coalition were promised an administrative state reformed in their interests. What they got was an administrative state dismantled so that a different set of private interests could occupy its remains. The populist base provided the political energy. The capital network provided the candidates, the money, and the appointments. The base got rhetoric. The network got the machinery.

Previous administrations’ revolving doors were reversible. A new president hires different people, appoints different regulators, shifts priorities. The door revolves. But the technologies coming online through this occupation create a different kind of problem — one that a subsequent election cannot undo.

Once autonomous weapons systems are deployed at hyperscale with configurable human oversight, they do not get recalled by the next administration. Once mass surveillance infrastructure is fused across classified networks under proprietary ontology mappings — and agencies discover, as the DIA did, that they “cannot run a competition” because they lack the software rights or documentation to share with competitors — it does not get un-fused. Once AI-driven processes replace career civil servants with institutional knowledge, that knowledge does not regenerate.

Each of these locks is already turning. And they turn in one direction.

The question this raises is not about the people currently holding these positions. Personnel change. Administrations end. The question is about the structure they are building while they hold the machinery, and whether that structure constrains what any subsequent government can do. That is the argument of Part 3: AI does not merely serve power. It ratchets it.

Until Tomorrow

Notes

¹ The Anthropic-Pentagon standoff is detailed in Part 1 of this series. The specific demand was revealing: the Pentagon’s overnight “best and final offer” asked Anthropic to delete language about “analysis of bulk acquired data” — the contractual prohibition on mass domestic surveillance. In an internal memo, Amodei wrote that this was “exactly the scenario we were most worried about.” When Anthropic refused, Trump directed every federal agency to cease using its technology; Hegseth designated it a supply-chain risk; and OpenAI announced a replacement deal the same evening.

² Yarvin’s key texts include “An Open Letter to Open-Minded Progressives” (2008) and the Unqualified Reservations blog. His framework treats democratic institutions as fundamentally illegitimate and proposes replacing them with corporate-style governance structures — a CEO-monarch running the state as a going concern. The through-line from this intellectual program to DOGE’s operational practice is direct.

³ The investigation was reported by The Washington Post. The engineer allegedly sought to transfer Social Security data to his personal computer for "sanitizing" before uploading it to the systems of a private company. A colleague who refused to assist cited legal concerns.